Building with Generative AI: The Tricks and Tips You Need to Avoid Common Pitfalls

Ian Massingham

Ian introduces his background in cloud computing, starting at AWS in 2013 and witnessing its rapid European growth. After roles at MongoDB and a data startup, he joined early-stage Griptape. Initially focused on building a general framework for LLM-based applications amid a crowded “agentic systems” boom, the company struggled to differentiate. In 2025, they pivoted to a vertical focus: generative AI tools for artists and VFX professionals. Their product, Griptape Nodes, is a visual, node-based workflow editor that lets non-technical users easily combine advanced AI models for image, video, and audio generation to create high-quality creative assets.

Ian explains that Griptape was recently acquired by Foundry, creators of industry-standard compositing tools like Nuke, used in productions such as Game of Thrones. The acquisition integrates Griptape into a larger VFX ecosystem.

He then shifts to fundamentals of LLMs: they only process and generate tokens based on training data, meaning real-world capabilities (like current info or calculations) come from surrounding applications, not the model itself. Building LLM apps therefore requires additional systems. He emphasizes that most use cases won’t be chat-based, advises careful API and cost choices, and recommends using frameworks to manage models efficiently and sustainably.

Ian explains that LLMs struggle with maths because they are probabilistic token predictors, not true calculators, so they often produce inconsistent or incorrect answers. Research has highlighted their unusual internal reasoning, which combines rough estimation with precise digit prediction. He notes additional limitations: non-determinism, outdated knowledge, and the fact that a model accessed through its API alone has no way to retrieve real-time information. These gaps are filled by external tools, which handle tasks like calculations or retrieving current data. The LLM requests a tool, the surrounding application runs it and passes the result back, and this back-and-forth continues until the model can answer. However, as systems scale, managing many tools becomes complex, leading to “tool resolution” issues where models struggle to choose the correct tool reliably.
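The back-and-forth between model and application can be sketched in a few lines. This is a minimal illustration rather than anything shown in the talk: `fake_model`, the message format, and the `calculator` tool are all hypothetical stand-ins, and a real application would replace `fake_model` with a call to an actual LLM API.

```python
import ast
import operator

# Hypothetical tool owned by the application: a small, safe arithmetic evaluator.
_OPS = {ast.Add: operator.add, ast.Sub: operator.sub,
        ast.Mult: operator.mul, ast.Div: operator.truediv}

def calculator(expression: str):
    """Evaluate +, -, *, / on numeric literals without using eval()."""
    def ev(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.left), ev(node.right))
        raise ValueError("unsupported expression")
    return ev(ast.parse(expression, mode="eval").body)

TOOLS = {"calculator": calculator}

def fake_model(messages):
    """Stand-in for an LLM call (assumption): it asks for the calculator once,
    then phrases the tool result as a final answer."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "text", "content": f"The answer is {last['content']}."}
    return {"type": "tool_request", "tool": "calculator", "input": "1234 * 5678"}

def run(question, model=fake_model, max_turns=5):
    """Application loop: the model only requests tools; the application runs them."""
    messages = [{"role": "user", "content": question}]
    for _ in range(max_turns):
        reply = model(messages)
        if reply["type"] == "text":
            return reply["content"]
        result = TOOLS[reply["tool"]](reply["input"])
        messages.append({"role": "tool", "content": str(result)})
    raise RuntimeError("model did not finish within max_turns")
```

The `max_turns` cap is a design choice worth keeping in any real loop: it bounds how many times a model can keep requesting tools before the application gives up.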

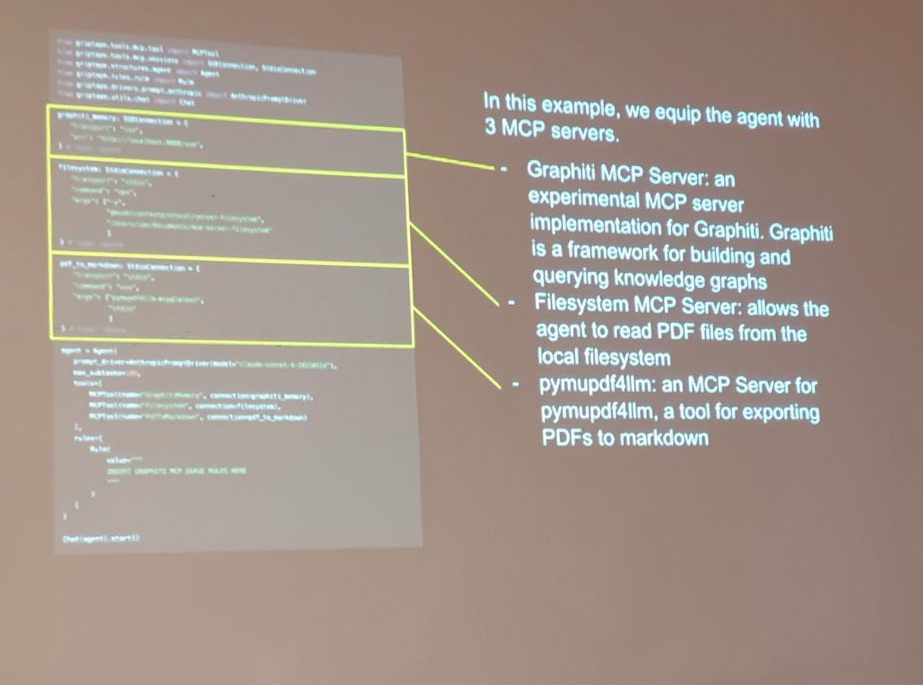

Ian explains that early multi-tool systems became unpredictable, leading to solutions like Anthropic’s Model Context Protocol (MCP), which enables automatic tool discovery and access to resources and pre-defined prompts. MCP allows LLMs to interact with external systems more effectively, improving performance on complex tasks. However, too many MCP servers can still cause confusion. He demonstrates how combining MCP tools with memory systems enables advanced use cases like document analysis and coding automation, dramatically boosting productivity. Finally, he highlights agentic systems: instead of one overloaded agent, multiple specialised agents with focused context and tasks work together more efficiently and reliably.
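The idea of several specialised agents, each with a small set of tools, can be illustrated with a toy router. This is a sketch under stated assumptions, not Griptape's or MCP's API: the `Agent` class, the keyword-based `route` function, and both tools are hypothetical, and a real system would typically let an LLM do the routing and the tool selection.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set

@dataclass
class Agent:
    """Hypothetical specialised agent: a narrow remit and only the tools it needs."""
    name: str
    keywords: Set[str]
    tools: Dict[str, Callable[[str], str]]

    def handle(self, task: str) -> str:
        # A real agent would call an LLM with only self.tools in its context.
        # Here we simply run the agent's first (and only) tool.
        tool_name, tool = next(iter(self.tools.items()))
        return f"{self.name}/{tool_name}: {tool(task)}"

def word_count(text: str) -> str:
    return f"{len(text.split())} words"

def find_todos(text: str) -> str:
    return f"{text.count('TODO')} TODO markers"

AGENTS: List[Agent] = [
    Agent("document-analyst", {"document", "report", "summarise"},
          {"word_count": word_count}),
    Agent("code-reviewer", {"code", "function", "review"},
          {"find_todos": find_todos}),
]

def route(task: str, agents: List[Agent] = AGENTS) -> Agent:
    """Pick the agent whose keywords overlap most with the task (toy heuristic)."""
    words = set(task.lower().split())
    best = max(agents, key=lambda a: len(a.keywords & words))
    if not (best.keywords & words):
        raise LookupError(f"no agent is responsible for: {task!r}")
    return best

def run(task: str) -> str:
    return route(task).handle(task)
```

Because each agent only ever sees its own tools, the tool-resolution problem shrinks from "choose among everything" to "choose among a handful".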

Ian explains that agentic systems introduce “agency,” meaning decision-making is delegated to AI, which can lead to unpredictable behaviour and repeated actions that increase costs. Since every API call consumes tokens, large or inefficient systems can become expensive quickly, making observability essential to track usage and identify cost drivers. He warns that powerful models and media generation can escalate costs at scale. While frameworks and tools help manage this, developers must remain cautious. He also stresses the importance of foundational software engineering skills—like testing, security, and system design—arguing that AI boosts productivity but also amplifies risks if used without proper expertise and discipline.
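A very small version of the usage tracking described here might look like the following. It is a sketch only: the model names and per-token prices in `PRICES` are invented for illustration, and `UsageTracker` is a hypothetical helper, not part of any framework mentioned in the talk.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Price:
    input_per_million: float   # USD per million input tokens
    output_per_million: float  # USD per million output tokens

# Hypothetical prices for illustration only; check your provider's price list.
PRICES = {
    "small-model": Price(0.50, 1.50),
    "large-model": Price(10.00, 30.00),
}

class UsageTracker:
    """Accumulates cost per component and stops the run when a budget is exceeded."""

    def __init__(self, budget_usd: float):
        self.budget_usd = budget_usd
        self.cost_by_component = defaultdict(float)

    def record(self, component: str, model: str,
               input_tokens: int, output_tokens: int) -> float:
        price = PRICES[model]
        cost = (input_tokens * price.input_per_million
                + output_tokens * price.output_per_million) / 1_000_000
        self.cost_by_component[component] += cost
        if self.total() > self.budget_usd:
            # Guard against agents that loop and repeat expensive calls.
            raise RuntimeError(
                f"budget of ${self.budget_usd:.2f} exceeded after call from {component!r}"
            )
        return cost

    def total(self) -> float:
        return sum(self.cost_by_component.values())

    def top_drivers(self, n: int = 3):
        """Return the n most expensive components, highest cost first."""
        ranked = sorted(self.cost_by_component.items(),
                        key=lambda item: item[1], reverse=True)
        return ranked[:n]
```

Calling `record` after every model call, keyed by the agent or step that made it, gives a per-component breakdown, and the budget check turns a runaway loop into an early, visible failure.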

Ian argues that modernising systems—such as breaking monoliths into service-oriented architectures—makes them easier for agents to interact with. For debugging, strong observability is essential, alongside evaluations (“evals”) to test non-deterministic LLM outputs through repeated, criteria-based scoring. He emphasizes validating both structure and quality before production. Looking ahead, he advocates shifting focus from rapid AI expansion to safety, responsibility, observability, and cost control. While acknowledging heavy investment in AI, he believes its short-term impact is overhyped but long-term impact underestimated. Ultimately, he stresses balancing innovation with responsible use, warning against environmental and societal risks from unchecked AI growth.
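The repeated, criteria-based scoring behind evals can be sketched as below. The expected JSON shape (a `summary` string), the two criteria, the 90% threshold, and `flaky_model` are all assumptions chosen for illustration; a real eval would call the actual model and use criteria specific to the task.

```python
import json
import random

def check_structure(raw: str):
    """Structural check: output must be a JSON object with a string 'summary'."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if isinstance(data, dict) and isinstance(data.get("summary"), str):
        return data
    return None

# Hypothetical quality criteria, each a named pass/fail check.
CRITERIA = {
    "mentions_topic": lambda d: "invoice" in d["summary"].lower(),
    "is_concise": lambda d: len(d["summary"].split()) <= 30,
}

def run_eval(generate, runs: int = 20, threshold: float = 0.9):
    """Call a non-deterministic generator many times and score every output."""
    passes = 0
    failures = {name: 0 for name in CRITERIA}
    failures["structure"] = 0
    for _ in range(runs):
        data = check_structure(generate())
        if data is None:
            failures["structure"] += 1
            continue
        failed = [name for name, check in CRITERIA.items() if not check(data)]
        for name in failed:
            failures[name] += 1
        if not failed:
            passes += 1
    pass_rate = passes / runs
    return {"pass_rate": pass_rate,
            "passed": pass_rate >= threshold,
            "failures": failures}

_rng = random.Random(0)

def flaky_model() -> str:
    """Stand-in for an LLM (assumption): sometimes returns malformed output."""
    if _rng.random() < 0.2:
        return "Sure! Here is the summary you asked for."
    return json.dumps({"summary": "The invoice is overdue by 30 days."})
```

Running `run_eval(flaky_model)` reports a pass rate and a per-criterion failure count, which is usually more useful for debugging than a single pass or fail.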

Ian says it’s unclear whether AI model prices will fall, as costs depend on both expensive training and ongoing inference, and it’s uncertain how much further capability needs to improve. He notes models are already approaching broad usefulness. On company strategy, he highlights a key lesson: startups often aim too broadly, but success requires tight focus on a specific vertical unless backed by major funding. Griptape succeeded by concentrating on VFX, identifying gaps in competitors like ComfyUI, and avoiding issues such as restrictive licensing and dependency conflicts, factors that ultimately helped make the company an attractive acquisition target.

Ian’s talk was followed by many questions from participants, which he answered in full.